Delete Your Voice Recordings from Alexa, Google Assistant, Cortana and Siri

Google, Amazon, Apple, and Microsoft keep recordings of your smart speaker voice commands on their servers. Here’s how to delete them.

When you summon a digital assistant on one of the devices it lives on, your voice command is uploaded to the company’s servers and saved to your account. Whether you’re using a smart speaker or your phone, here’s how to delete your voice recordings from Amazon, Google, Microsoft, and Apple servers to help protect your privacy.

Delete Alexa Voice Recordings

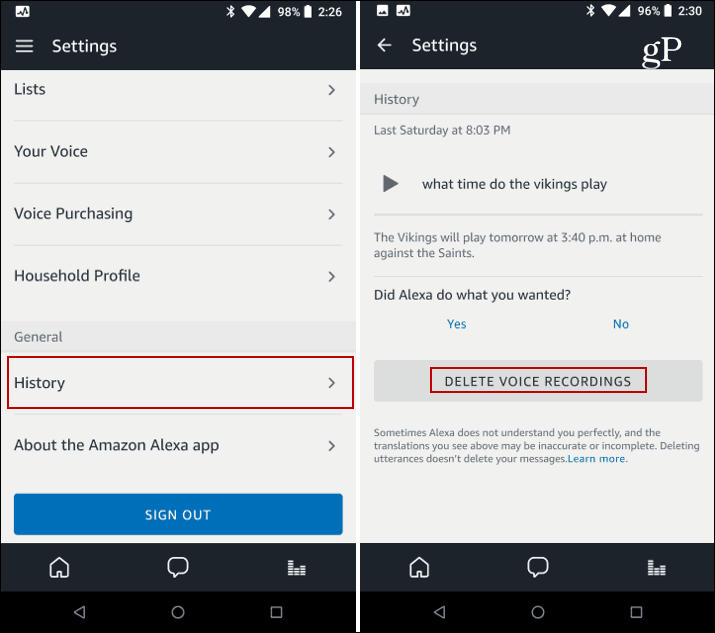

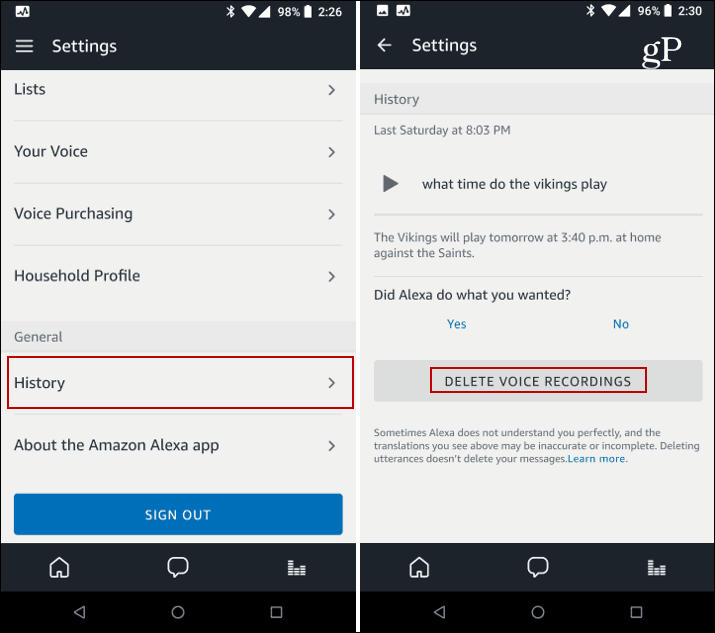

Here, I’m using the app on my Android phone, but you can use the Alexa site, too. Launch the Alexa app on your mobile device, tap Settings, and then scroll down and tap History. From there, you can delete individual recordings. However, if you want to get rid of them all at once, you need to sign in to your Amazon account on the site, choose your Alexa device, and delete all of its recordings. For full details, read: How to Delete All Alexa Voice Recording History.

You can find recordings of all your voice interactions with Alexa in the app.

It’s also worth mentioning that if you have a Fire TV and use the Alexa remote to do voice searches, you can delete those as well. Full details can be found in our article: How to Delete Amazon Fire TV Remote Voice Recordings.

Delete Google Assistant Voice Recordings

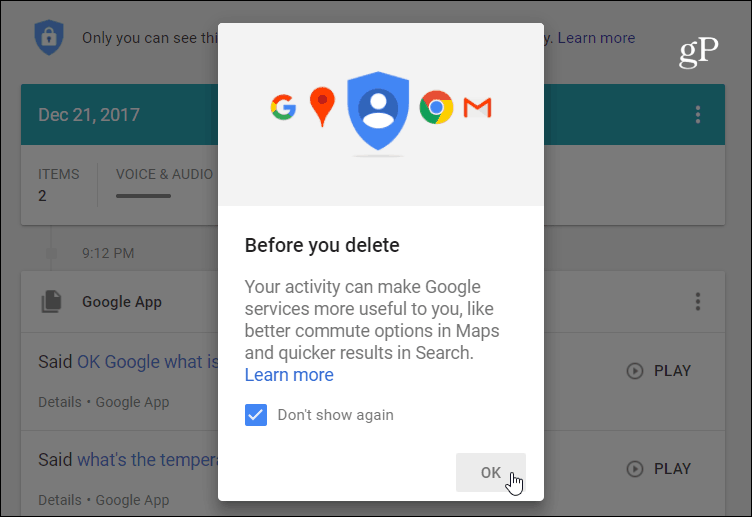

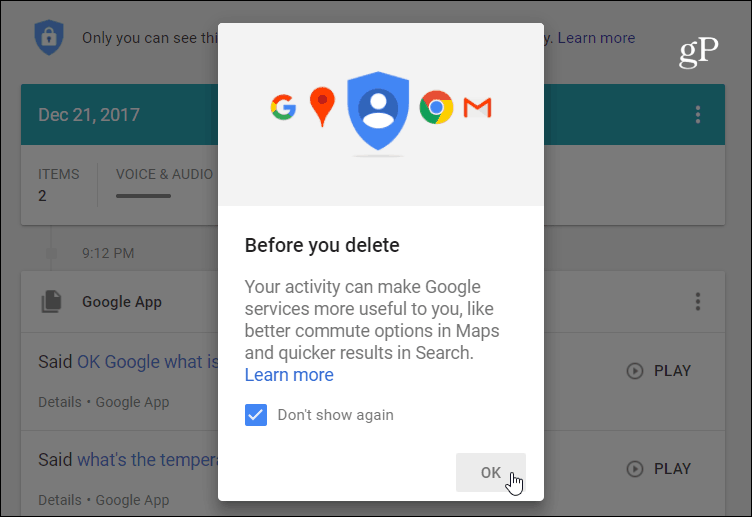

To get rid of the recordings of what you’ve said to your Google Home, head to the Google Voice Activity page on your PC or phone. There, you will find a list of everything you’ve used Google Assistant for, on both the speaker and your phone. As with Alexa, you can play the recordings back, and you can delete them one by one or all at once. Read our article: How to Delete Your Google Voice History for more details.

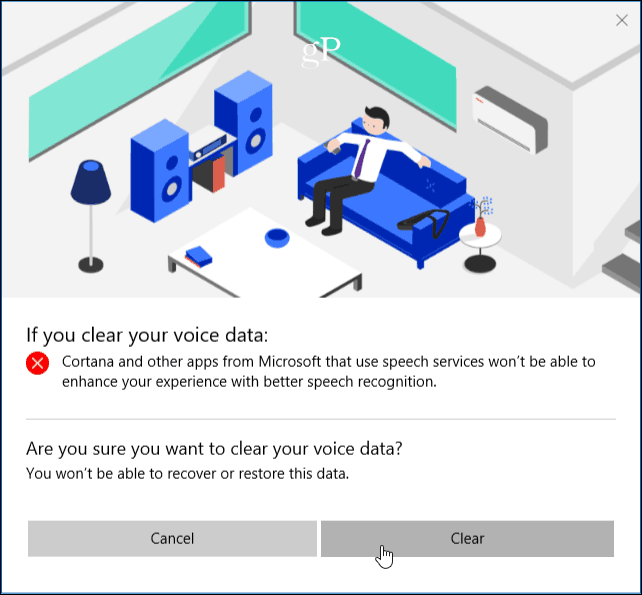

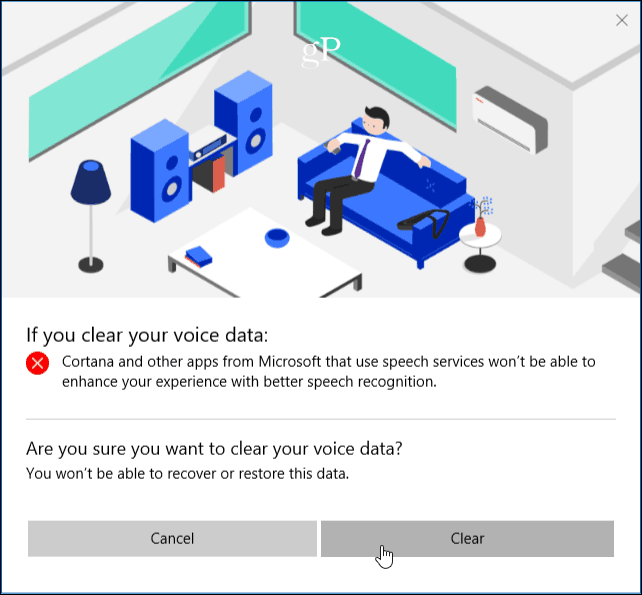

Delete Cortana Voice Recordings

For Cortana voice history, head to the Voice History section of the Microsoft Privacy Dashboard and sign in if you haven’t already. There, you will find a list of your voice recordings. I’ve found this to be the most interesting one, as Cortana on the Harman Kardon Invoke goes off prematurely far more often than any other speaker. With Cortana on Windows 10, you can also delete other search items and limit how much information is collected.

Apple’s Siri Voice Recordings

Siri handles your voice interaction history in a much less user-friendly way than the other assistants. There isn’t a way to view or listen to your voice recordings, or to delete individual ones. You need to turn off Siri for active listening. To delete your past voice interaction history from Apple servers, you need to turn off Voice Dictation, too. To do that, go to Settings > General > Keyboard, swipe down, and turn off the “Enable Dictation” switch.

While these devices should only record what you say after you trigger their wake word, that’s not always the case. As you probably know, if a smart speaker is within listening distance of your TV or another audio source (including a person), it can wake up and start recording even when you don’t want it to. When I went through my archive of recordings, I found some interesting things. Sometimes, a recording contained up to 30 seconds of audio from a movie or podcast. Also, if a speaker is triggered while other people are in the room, it records what everyone is saying. When I made a phone call with Alexa, the things said on my end were recorded. You can probably see what the privacy issue is here.
